A deadlock is a situation in an operating system in which a process enters a waiting state because the resource it has requested is held by another process that is itself waiting.

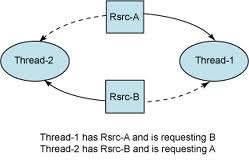

Let's first understand what a deadlock in an operating system is. A deadlock occurs when two processes compete for exclusive access to resources and neither can obtain it, because the other process is holding what it needs. This results in a standoff in which neither process can proceed. Once a deadlock has formed, it is typically broken by terminating one of the processes.

There are ways to prevent deadlock, and there are also techniques to avoid it. Deadlock avoidance is a process used by the operating system to keep a deadlock from forming in the first place. One such technique is the banker's algorithm.

In the banker's algorithm, we calculate the resources a process needs before it enters the run state. To take a real-life example, the customers of a bank are similar to the processes on a computer. The bank has customers and gives them loans, and it works in such a way that all customers are satisfied: every customer's needs, such as getting a loan from the bank, are fulfilled and no customer is left waiting.

A safe state is one in which the processes can be run in sequence. In that case, each process runs with all the resources it needs allocated to it, and it is not preempted. Every process requesting resources will be fulfilled, because no other process is running at the same time. In an unsafe state, multiple processes are running and requesting resources, which may cause a deadlock to occur. So we prefer the safe state to avoid deadlock.

A useful way to avoid and minimize SQL Server deadlocks: try to keep transactions short, which avoids holding locks in a transaction for a long period of time.

The project titled "Road Construction Using Highway Planning and Obstruction Prevention" aims to address one of the major issues that the Indian road construction department is facing.
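The safe-state idea behind the banker's algorithm can be sketched roughly as follows. This is a minimal illustration, not a definitive implementation: the function name `find_safe_sequence`, the matrix layout (one row per process, one column per resource type), and the sample numbers are all assumptions made for the example.

```python
from typing import List, Optional


def find_safe_sequence(
    available: List[int],
    allocation: List[List[int]],
    need: List[List[int]],
) -> Optional[List[int]]:
    """Return an order in which every process can finish, or None if unsafe.

    available[r]     : free units of resource r (hypothetical layout)
    allocation[p][r] : units of r currently held by process p
    need[p][r]       : units of r that process p may still request
    """
    work = list(available)                 # resources free at this point
    finished = [False] * len(allocation)
    order: List[int] = []

    progressed = True
    while progressed:
        progressed = False
        for p, (held, wanted) in enumerate(zip(allocation, need)):
            # A process can run to completion if its remaining need fits.
            if not finished[p] and all(w <= free for w, free in zip(wanted, work)):
                # When it finishes, it returns everything it was holding.
                work = [free + h for free, h in zip(work, held)]
                finished[p] = True
                order.append(p)
                progressed = True

    return order if all(finished) else None


# Assumed sample data: 5 processes, 3 resource types.
AVAILABLE = [3, 3, 2]
ALLOCATION = [[0, 1, 0], [2, 0, 0], [3, 0, 2], [2, 1, 1], [0, 0, 2]]
NEED = [[7, 4, 3], [1, 2, 2], [6, 0, 0], [0, 1, 1], [4, 3, 1]]

if __name__ == "__main__":
    print(find_safe_sequence(AVAILABLE, ALLOCATION, NEED))  # [1, 3, 4, 0, 2]
    # Two processes each hold one unit and each need one more: unsafe.
    print(find_safe_sequence([0], [[1], [1]], [[1], [1]]))  # None
```

If the function returns a sequence, the state is safe, because the processes can be run one after another in that order. If it returns `None`, no such order exists and granting further requests may lead to a deadlock.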
Deadlock is a state in which a process is waiting for a resource that is already in use by another process, while that other process is itself waiting for another resource.

Voting–Priority-Based Deadlock Prevention in Multi-server Multi-CS Distributed Systems. Author: Kamta Nath Mishra, Navin Kumar. Source: National Academy science letters 2020 v.43 no.7 pp. 625-630. ISSN: 0250-541X. Subject: algorithms, employment, methodology, solutions, starvation, wills.

Abstract: Concurrency and starvation control had always been a problem in distributed system. In the voting-based technique, a process receiving the greater part of votes will only be permitted to go into the critical section (CS). But, this particular technique has a shortcoming that if no process receives majority of votes, then the whole system will stay out in idle state, while a substantial amount of minority processes will continue in waiting queue. In the priority-based distributed mutual exclusion (MUTEX) algorithms, the process having uppermost priority among all the processes of distributed system is permitted to enter into the CS. Hence, leaving the other large number of lower-priority processes in the waiting queue may result a significant increase in the length of waiting queue. In order to solve the concurrency and starvation control-related problems of distributed systems, we have presented the voting-, priority- and non-maskable interrupt-based optimal and feasible solution in this research work. Here, an efficient and collective solution by including priority with voting-based approaches and certain other constraints like handling non-maskable interrupts and using shortest job sequencing first scheduling techniques will be useful for further improving the performance of the distributed system. The proposed technique of this paper permits the formation of multiple critical sections in single- and multi-server-based distributed systems, and hence, it prevents the processors from entering into inoperative state which leads to increase in overall throughput of system.

The basic problem that used to happen was that ASP.NET would limit the number of threads that it allowed anything to use, and web requests were handled on I/O threads, so incoming web requests would consume all of the available threads and I/O completion for outstanding (asynchronous) calls would be queued up behind the very web requests that were.
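The two entry rules mentioned in the abstract, majority voting and highest priority, can be illustrated with a small sketch. This is not the algorithm from the paper, whose details are not given here. The function names, the dictionary shapes, and the choice to fall back to priority when no process wins a majority are all assumptions made purely for illustration.

```python
from collections import Counter
from typing import Dict, Optional


def pick_by_majority(votes: Dict[str, str]) -> Optional[str]:
    """votes maps a voter id to the process it votes for (hypothetical shape).

    Returns the process with a strict majority of votes, or None when no
    process has one (the idle case described in the abstract).
    """
    if not votes:
        return None
    candidate, count = Counter(votes.values()).most_common(1)[0]
    return candidate if count > len(votes) / 2 else None


def pick_by_priority(priorities: Dict[str, int]) -> Optional[str]:
    """Return the waiting process with the highest priority value, if any."""
    if not priorities:
        return None
    return max(priorities, key=lambda process: priorities[process])


def pick_next(votes: Dict[str, str], priorities: Dict[str, int]) -> Optional[str]:
    """Assumed combination: prefer a majority winner, else use priority."""
    winner = pick_by_majority(votes)
    if winner is not None:
        return winner
    return pick_by_priority(priorities)


if __name__ == "__main__":
    priorities = {"A": 1, "B": 5}
    # A has two of three votes, so it enters the critical section.
    print(pick_next({"s1": "A", "s2": "A", "s3": "B"}, priorities))  # A
    # The vote is split, so the higher-priority process B is chosen.
    print(pick_next({"s1": "A", "s2": "B"}, priorities))  # B
```

With voting alone, the split vote in the second call would leave the critical section idle. Adding a tie-breaking rule such as priority is one way to keep the system making progress, though a pure priority rule can in turn starve low-priority processes.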
